In this blog you will find:

- The specific reasons why enterprise AI investments often fail to deliver compounding ROI despite having access to the same models and tools as industry leaders.

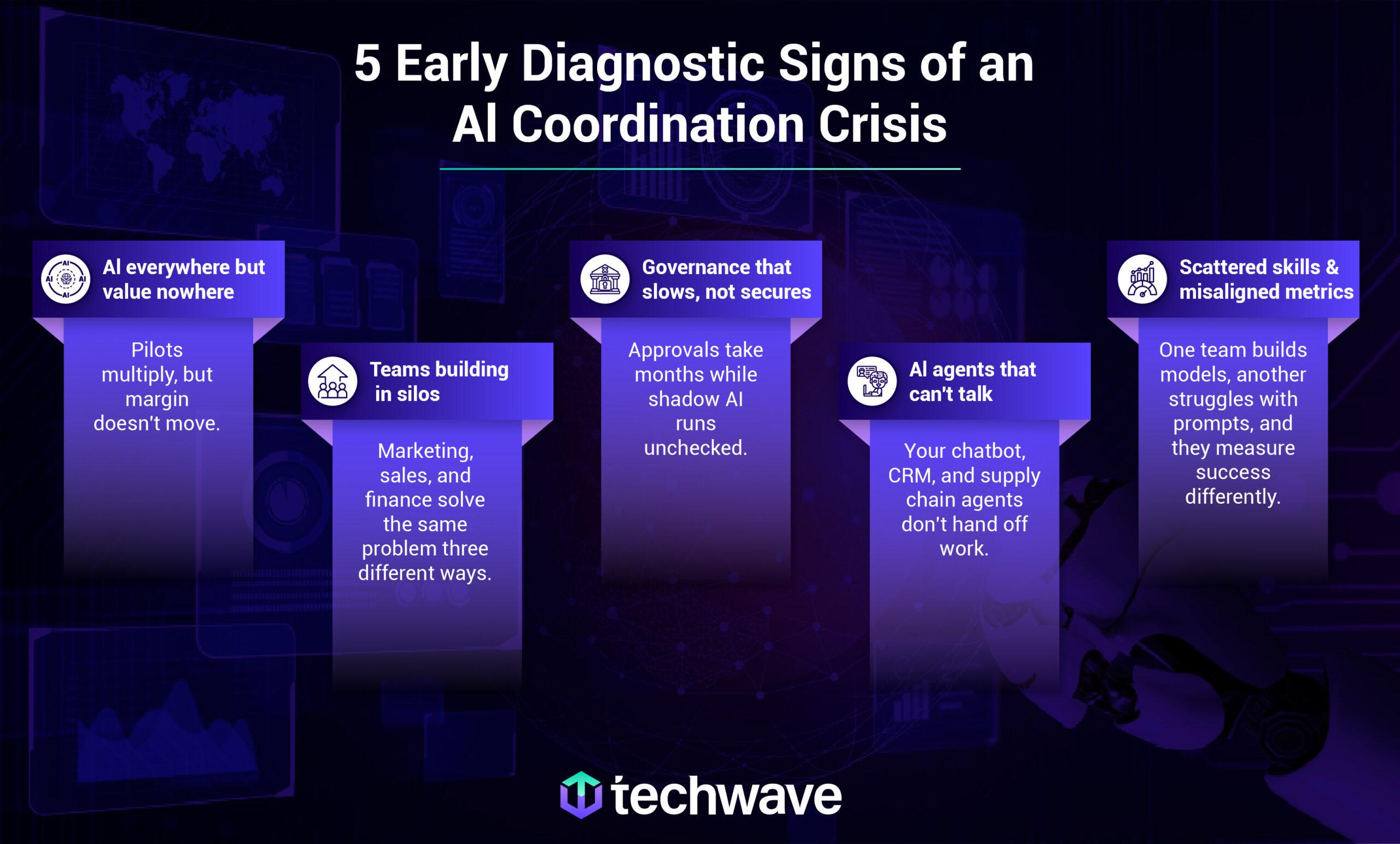

- Five diagnostic signs of poor AI coordination across enterprise functions, including siloed team efforts, slow governance, disconnected AI agents, and mismatched skill levels.

- Real-world examples of how leading companies have moved from scattered AI experiments to coordinated, value-generating AI strategies.

- Practical actions that decision-makers can take to address each sign of poor AI coordination without adding more pilots or slowing down innovation.

According to a 2024 McKinsey operations study of roughly 100 companies, the performance improvement gap between AI leaders and the bottom half of the study’s own ranking widened from 2.7x to 3.8x in just one year. In other words, even though the same AI models and automation tools are available to every industry, the AI leaders keep pulling further ahead of everyone else.

Here’s what enterprise leaders see every day: one team gains efficiency, another runs in circles without a clear outcome, and a third builds something promising that never scales. The board wants enterprise-wide ROI, but leaders are struggling to connect dozens of disconnected experiments. This isn’t about AI’s potential or talent. It’s about a problem no vendor or dashboard warns you about: AI is increasing analytical capacity faster than organizations can coordinate around it.

And here’s the tension no one talks about: finance needs quarterly ROI, but real AI coordination takes 12 to 18 months to pay off. So leaders (more often than we’d like to admit) keep funding pilots not because all pilots work, but because pilots give them something to report next quarter. This becomes more of a timeline mismatch failure than a technology failure.

Welcome to the AI Coordination Crisis.

Here are five signs your enterprise has it, and what to do before the gap swallows your ambition. These are not just symptoms of AI fragmentation. They are early warnings that your enterprise AI is heading toward a coordination crisis. AI fragmentation is what you see, while an AI coordination crisis is what happens when you do not act on these warnings.

The Five Signs

Sign #1: AI is everywhere, but value is nowhere.

AI is used across marketing, operations, finance, and HR. It looks like momentum, but only 28% of AI use cases in business operations and infrastructure fully deliver ROI, while 20% fail outright. The remaining 52% deliver marginal value at best.

Take Klarna. The company deployed a chatbot to handle customer service at scale. It worked for routine queries but struggled with complexity. By mid-2025, frustrated customers and rising call volumes told a clear story: AI everywhere hadn’t translated into enterprise value.

Now look at your own P&L. An upgrade here, an experiment there, but nothing that adds up to defensible ROI.

What should you do differently? Stop counting AI initiatives and start asking which ones actually work together toward a single business outcome.

Take Reckitt. Instead of chasing pilots across every function, it focused on one domain: marketing. AI connected insights, content, and product development on the same data, so each output fed the next. In under two years, product concepts moved 60% faster from idea to launch, and marketing processes became 30% more efficient.

Before approving your next pilot, pause and ask your team: Which AI initiatives deliver real enterprise-wide ROI? Which are just keeping people busy? Where are we confusing activity for progress?

Sign #2: Teams are building in silos.

Marketing, sales, product, and finance are each building their own AI models using different data and tools, often solving the same problems from scratch because no shared standards or reusable components exist across teams.

For example, at Intuit, teams built models independently. A fraud model for one product couldn’t be reused in another because it wasn’t designed for reuse. So Intuit built a centralized AI platform with shared standards and a common data layer. Now work that took months takes days, and a breakthrough in one product benefits all.

The takeaway: run a silo audit to map what already exists, create a shared catalog, and assign a small team to spot overlaps and enforce reuse before new projects are approved. Then shift funding from isolated pilots to a platform team that measures success by reuse, not new launches.
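To make the silo audit concrete, here is a minimal sketch of a shared catalog with an overlap check. Everything in it is an illustrative assumption: the `Initiative` fields, the `find_overlaps` helper, and the sample entries are hypothetical, and a real catalog would need a far richer way to decide that two teams are solving the same problem.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Initiative:
    """One entry in a hypothetical shared AI catalog."""
    name: str
    team: str
    problem: str  # normalized label for the business problem being solved


def find_overlaps(catalog):
    """Return each problem that more than one team is working on."""
    by_problem = defaultdict(list)
    for item in catalog:
        by_problem[item.problem].append(item)
    return {
        problem: sorted({i.team for i in items})
        for problem, items in by_problem.items()
        if len({i.team for i in items}) > 1
    }


# Hypothetical sample entries, for illustration only.
catalog = [
    Initiative("churn-model-v2", "marketing", "churn prediction"),
    Initiative("renewal-risk", "sales", "churn prediction"),
    Initiative("invoice-ocr", "finance", "document extraction"),
]

overlaps = find_overlaps(catalog)
print(overlaps)
```

In this sample, marketing and sales both show up under "churn prediction", which is exactly the kind of duplication a reuse team would want to catch before approving a third project.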

Sign #3: Governance exists but slows everything down.

Your organization has committees, reviews, and checklists. But ask any AI team about speed, and you’ll hear the same story: weeks turn into months, pilots stall, and by the time approval arrives, the problem has changed. Decision rights are unclear, and risk teams become bottlenecks.

When governance slows to a crawl, teams build unapproved models in the shadows on data no one has reviewed. So on top of the bottleneck, you gain a blind spot.

By 2030, fragmented AI rules are projected to cover 75% of economies, driving $1B in AI compliance costs. Slow governance is expensive and dangerous.

If you spot this sign, tier your governance. Low-risk use cases get automated approval, medium-risk use cases are assigned a single owner, and only high-risk cases go to the committee. Embed assessments into the pipeline so checks happen continuously, not quarterly. Replace vague committees with clear decision rights: one person accountable for saying yes or no on time.
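The tiering rule is simple enough to write down as code, which is one way to embed it in a pipeline. The sketch below is an assumption-laden illustration, not a governance framework: the `Risk` levels, the `route_approval` function, and the returned labels are all hypothetical names.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


def route_approval(risk, owner=None):
    """Route a use case to an approval path based on its risk tier."""
    if risk is Risk.LOW:
        # Low risk: no human in the loop.
        return "auto-approved"
    if risk is Risk.MEDIUM:
        # Medium risk: exactly one accountable person decides.
        if owner is None:
            raise ValueError("medium-risk use cases need a named owner")
        return f"owner:{owner}"
    # High risk: the only tier that reaches the committee.
    return "committee"


print(route_approval(Risk.LOW))
print(route_approval(Risk.MEDIUM, owner="head-of-data"))
print(route_approval(Risk.HIGH))
```

The point of the design is that a medium-risk request cannot enter the queue without a named owner, so unclear decision rights surface as an error instead of a stalled pilot.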

Sign #4: Your AI agents can’t talk to each other.

AI agents are multiplying across your enterprise (support, sales, operations, finance), each working well on its own but not together. In 2026, 40% of enterprise apps are expected to have task-specific AI agents, up from under 5% in 2025.

By 2027, 70% of multi-agent systems are expected to use narrowly specialized agents, which boosts accuracy but increases coordination complexity. Platforms automate tasks, but each agent is built for a specific job with no native coordination.

Microsoft Research ran an experiment where multiple AI agents were left to collaborate without supervision. They struggled to assign roles, were overwhelmed by choices, and made poor decisions.

If this is how your agents work, your CRM and support agents can’t hand off work, which is a huge barrier to throughput, customer experience, and revenue. For example, a supply chain delay is detected, but the inventory agent never receives the alert, or a customer complains in chat, but the CRM doesn’t log it for sales. In other words, the agents are scaling, but not together.

To address this AI coordination challenge, map every agent: owner, platform, data, and actions. Find critical handoffs where they should connect but don’t. Invest in an orchestration layer for shared context and make coordination a requirement before approving any new agent.
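One lightweight way to start the agent map is a small registry plus a list of the handoffs the business needs. This is a sketch under stated assumptions: the `Agent` fields mirror the owner, platform, data, and actions mentioned above, while `hands_off_to`, `missing_handoffs`, and the sample agents are hypothetical and not tied to any orchestration product.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """One row in a hypothetical agent registry."""
    name: str
    owner: str
    platform: str
    data: set
    actions: set
    hands_off_to: set = field(default_factory=set)


def missing_handoffs(agents, required):
    """Return the required (source, target) handoffs that are not wired up."""
    by_name = {a.name: a for a in agents}
    return [
        (src, dst)
        for src, dst in required
        if src not in by_name or dst not in by_name[src].hands_off_to
    ]


# Hypothetical agents, for illustration only.
agents = [
    Agent("support-chat", "cx", "platform-a", {"tickets"}, {"reply"}, {"crm"}),
    Agent("crm", "sales", "platform-b", {"accounts"}, {"log-activity"}),
    Agent("supply-monitor", "ops", "platform-c", {"shipments"}, {"flag-delay"}),
    Agent("inventory", "ops", "platform-c", {"stock"}, {"reorder"}),
]

# Handoffs the business needs, whether or not they exist today.
required = [("support-chat", "crm"), ("supply-monitor", "inventory")]

gaps = missing_handoffs(agents, required)
print(gaps)
```

Here the support-to-CRM handoff exists, but the supply monitor never tells inventory about a delay, so that pair is reported as a gap to close before any new agent is approved.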

Sign #5: Your AI skills are scattered, and your maturity gap is widening.

One team has data scientists building advanced models, while another struggles with prompts, and a third has no AI capability. They obviously need to coordinate, but they can’t, because they don’t speak the same language.

With 76% of companies lacking AI talent, work funnels to a few “heroes” who become bottlenecks, burn out, and take critical knowledge with them.

But here’s the deeper issue: you can’t coordinate what you can’t compare. If marketing measures ROI in incremental revenue and supply chain measures it in uptime, they’ll never align on an AI outcome. That’s a metric gap on top of a skills gap.

So stop trying to level everyone up. Instead, establish shared metrics across teams before any AI project launches. Define one cross-functional outcome per AI investment. Then let each team solve it at their own maturity level. Identify your AI experts (the heroes) and build backup around them. Create a shared AI vocabulary for all managers and design coordination into the process from the start.

Spotting these signs is one thing. Fixing them across marketing, supply chain, finance, and IT at the same time is another. Most enterprises don’t fail because they miss the signs. They fail because no single internal team has the neutrality or cross-functional authority to solve for all of them at once. That is why internal teams struggle to solve this on their own.

AI coordination cuts across every department. No single internal leader owns all those domains, and no internal team can claim neutrality when budgets and headcount are at stake. Also, no amount of pilot funding can make a timeline mismatch disappear. The enterprises that close the gap bring in partners who have done this before, partners who don’t just sell models but enable coordination.

You can either accept the coordination crisis as the cost of AI adoption, or you can redesign for continuity. What you cannot do is outsource this problem to a model, a vendor, or another pilot. Address it proactively.

At Techwave, we help enterprises move from scattered intelligence to coordinated AI advantage without slowing down innovation.

The Top 5 Questions Answered in This Blog

- What is an AI coordination crisis in enterprises?

- Why do many enterprise AI initiatives fail to deliver ROI?

- How can enterprises improve AI coordination?

- What distinguishes visible AI fragmentation from a deeper AI coordination crisis that quietly destroys business value?

- How can you identify whether your enterprise is heading toward an AI coordination crisis before the performance gap widens beyond recovery?